What's Actually Happening in Federal Hiring Right Now?

- 83% of companies were projected to use automated tools to screen resumes by 2025, up from just over half two years earlier.

- 99% of hiring managers now report using some form of automated assistance in the hiring process, with 98% citing significant efficiency improvements.

- Applications surged more than 45% year-over-year in 2025, with approximately 11,000 applications submitted every minute on LinkedIn alone.

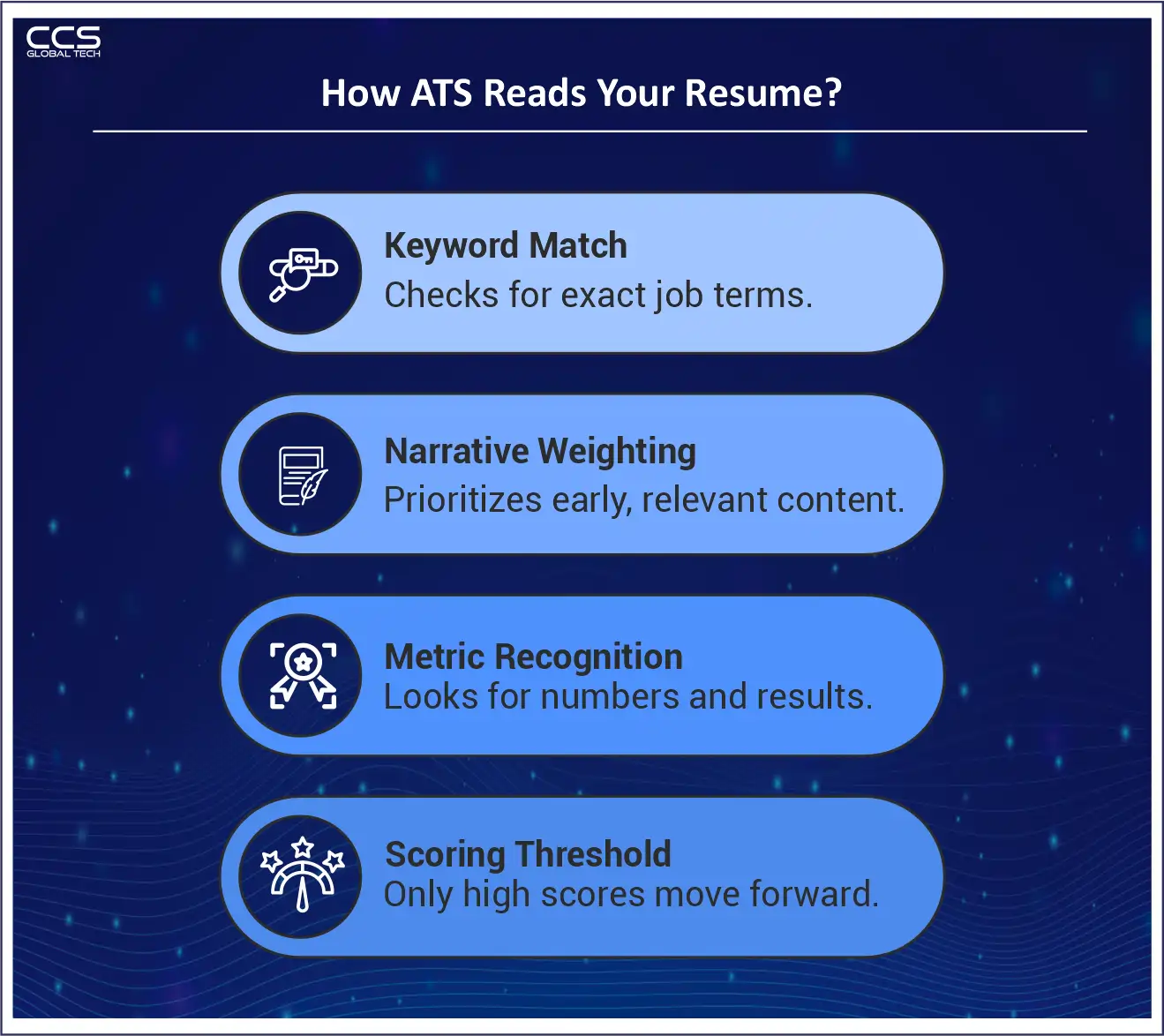

How These Screening Systems Actually Work

Language and Terminology Alignment

Structural Consistency Checks

Semantic Relevance Scoring

The Look-Alike Problem: When Everyone Optimizes for the Algorithm

A Real-World Example: The Resume That Two Systems Evaluated Differently

One of our recent placement engagements illustrates this dynamic precisely.

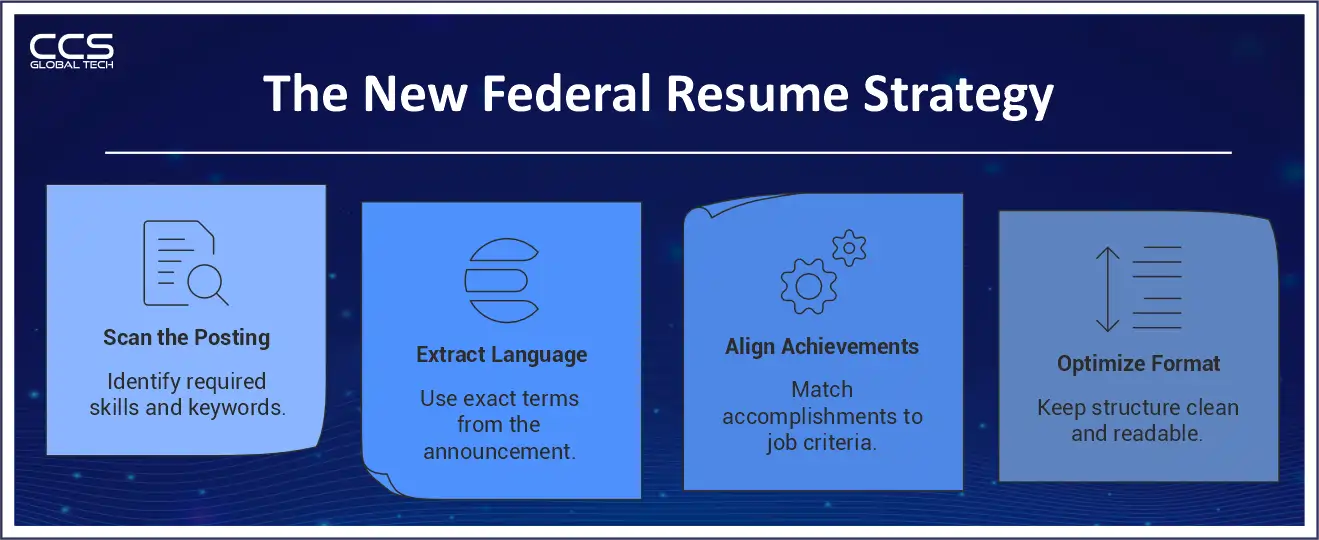

Five Strategies That Actually Move Federal Candidates Through Screening

1. Mirror the Job Announcement — Precisely

2. Eliminate Formatting That Confuses Parsing Systems

3. Lead Every Position with the Most Relevant Accomplishment

4. Quantify Outcomes Wherever Possible

5. Treat the KSAs and Self-Assessment Questions as Part of Your Resume

The Bias Issue: What Federal Candidates and Agencies Should Understand

A Federal Agency That Got the Balance Right

How CCS Global Tech Helps Federal Candidates and Agencies Navigate This Environment

Ready to Navigate Federal Hiring with Confidence?

FAQs

Q1: How do automated screening systems filter federal resumes?

A: Automated systems compare resumes against job announcements, analyze keyword alignment, evaluate career progression, and assign a match score. Only candidates above a scoring threshold move to human review.
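To make the scoring step concrete, here is a minimal sketch of a keyword-alignment score with a pass/fail threshold. It is an illustration only, not how any particular agency or vendor system works: the function names `match_score` and `passes_screening`, the sample phrase list, and the 0.7 threshold are all assumptions, and real systems also weigh factors such as career progression that this sketch ignores.

```python
import re


def match_score(resume_text: str, required_phrases: list[str]) -> float:
    """Return the fraction of required phrases found verbatim in the resume.

    Hypothetical scoring rule: case-insensitive substring match after
    collapsing whitespace. Synonyms and paraphrases earn no credit.
    """
    if not required_phrases:
        return 0.0
    normalized = re.sub(r"\s+", " ", resume_text.lower())
    hits = sum(
        1
        for phrase in required_phrases
        if re.sub(r"\s+", " ", phrase.lower().strip()) in normalized
    )
    return hits / len(required_phrases)


def passes_screening(
    resume_text: str, required_phrases: list[str], threshold: float = 0.7
) -> bool:
    """Return True if the score meets the (assumed) threshold for human review."""
    return match_score(resume_text, required_phrases) >= threshold


# Phrases a job announcement might list (illustrative, not from a real posting).
announcement_phrases = [
    "contract administration",
    "Federal Acquisition Regulation",
    "source selection",
    "cost analysis",
]

mirrored = (
    "Led contract administration and source selection under the "
    "Federal Acquisition Regulation; performed cost analysis."
)
paraphrased = (
    "Managed vendor agreements and chose suppliers under federal "
    "purchasing rules; reviewed pricing."
)

print(match_score(mirrored, announcement_phrases))
print(match_score(paraphrased, announcement_phrases))
```

Under this simplified rule, the two sample resumes describe similar experience, but only the one that mirrors the announcement's wording clears the threshold.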

Q2: Why am I not hearing back from federal jobs even though I am qualified?

A: Many qualified candidates are filtered out before human review because their resumes do not mirror the exact language or structure used in the job announcement.

Q3: Do federal agencies use AI to review resumes?

A: Most agencies and contractors use Applicant Tracking Systems and automated tools to screen applications before a hiring manager reviews them. These systems prioritize alignment and relevance.

Q4: What keywords should I include in a federal resume?

A: Use exact phrases from the job announcement, including required competencies, certifications, regulations, and specialized experience. Avoid relying on synonyms.

Q5: Does resume formatting affect automated screening results?

A: Yes. Text boxes, graphics, tables, and inconsistent formatting can confuse parsing systems and reduce your match score.

Q6: How important are metrics in federal resumes?

A: Quantified outcomes such as budgets managed, percentage improvements, contract values, and team sizes improve both automated scoring and human evaluation.

Q7: What is the most common mistake federal candidates make in automated screening?

A: The most common mistake is failing to align resume language directly with the job announcement. A close second is underestimating the importance of KSA (knowledge, skills, and abilities) statements and self-assessment responses.

Q8: Do USAJOBS questionnaire responses affect screening?

A: Yes. KSA and questionnaire responses are often scored alongside resumes. Weak or generic answers can lower your ranking before a human reviews your file.

Q9: Can tailoring my resume for each federal job increase my chances?

A: Yes. Customizing your resume to reflect the exact language and requirements of each posting significantly improves visibility in automated screening systems.

Q10: Are automated screening tools replacing human hiring decisions?

A: No. Automated tools determine which candidates move forward. Final hiring decisions are still made by human reviewers.